Your mining supply agreement was written for humans, but your trucks are driving themselves (Part 1)

In August 2025, in Benavides v Tesla, Inc (Benavides), a Miami jury awarded USD240-million in damages against Tesla, including USD200-million in punitive damages, in a wrongful death lawsuit related to its Autopilot system. The jury found that Tesla’s marketing created a misleading perception of safety, encouraging drivers to rely on a system not designed for certain conditions.

The verdict arose in the automotive context, but the liability questions at its centre – namely, when does a manufacturer answer for what its autonomous system did, and what must it disclose about the system’s limits – are the same questions that South African mining operations will face as AI-integrated yellow plant equipment becomes standard.

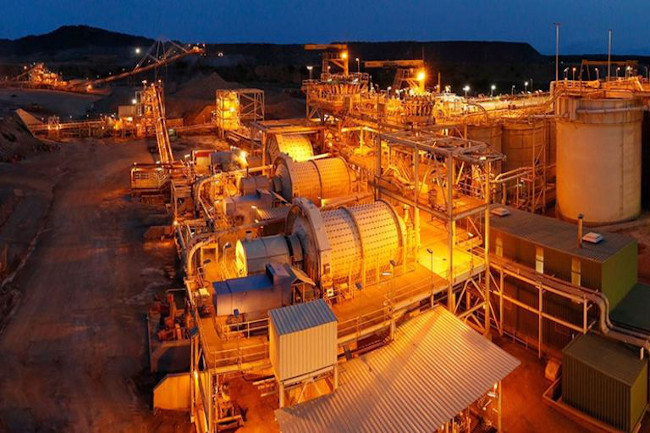

The deployment of autonomous systems and AI-powered machinery in African mining is accelerating. The contractual arrangements governing these deployments have not kept pace. Most supply agreements for AI-integrated equipment still closely resemble plant hire contracts from ten years ago: bilateral, human-focused, and silent on who bears the risk when a machine decides on its own. That silence will produce disputes, and the agreements in their current form are not equipped to resolve them.

Gaps fuelling AI disputes

Force majeure and foreseeable risk: In 2019, at BHP’s Jimblebar mine in Australia, two autonomous trucks collided during heavy rainfall. BHP attributed the incident to the rain deteriorating road surfaces, though regulators noted the system had not been programmed to limit speed in such conditions. A separate driverless truck collision occurred at Fortescue Metals, also in Australia, within the same period. At Escondida in Chile, the world’s largest copper mine, a union flagged what it called a huge risk to worker safety, less than one month after BHP completed a five-year autonomous vehicle rollout. A pattern emerges: AI systems fail in response to conditions the operating environment routinely produces. And these failures occurred in comparatively controlled mining environments.

South African operations contend with load-shedding and chronic connectivity disruptions, among other issues, as defining features of the landscape. These conditions are more volatile and less predictable, and any AI-dependent system will inevitably be exposed to them. Supply agreements include force majeure clauses that are potentially broad enough for a supplier to characterise a system failure triggered by such conditions as an unforeseeable event beyond its control, and thereby disclaim liability entirely. But where those conditions are endemic and well-documented, that characterisation is difficult to sustain, and any well-drafted agreement should foreclose it expressly.

Indemnities and the absent human: Standard indemnities are constructed around the acts or omissions of a supplier’s personnel. When an autonomous system makes a decision without human instruction, the supplier has a credible argument that no identifiable act or omission of its personnel caused the harm and that the indemnity therefore does not apply. Benavides exposed precisely this gap: even where a human operator was present but distracted, the jury found that the manufacturer’s design choices independently contributed to the loss. The implication is that liability attached not because a person failed, but because the system was designed in a way that allowed failure. Most indemnity clauses in current supply agreements are not drafted to capture that distinction, and until they are, the risk of an unindemnified loss arising from an autonomous decision remains unresolved.

Production downtime: Where AI systems become a linchpin of operations, their failure can cause significant financial loss without any physical damage occurring at all. That loss is consequential in nature and routinely excluded by standard limitation clauses. The insurance position compounds the problem, as most business interruption (BI) policies respond only where the loss follows physical damage to insured property. Where an AI system causes production downtime without any such damage (for example, through a software malfunction, a failed autonomous routing decision, or a sensor misread), there is no physical damage trigger, and the BI policy is unlikely to respond. Even where a mine holds broader cover, losses of this kind may fall squarely within a cyber-loss or computer systems failure exclusion. The result is that, for mines operating under production targets and offtake obligations, unplanned AI-caused downtime is a core commercial exposure that is neither covered by the supply agreement nor insured against. That gap needs to be closed contractually before it is tested in practice.

The OEM that is not at the table: Supply agreements are typically bilateral. The Original Equipment Manufacturer (OEM) that designed the AI system, controls its training data, and deploys software updates is usually not a party. Section 61 of the Consumer Protection Act imposes strict liability on every participant in the supply chain (producer, importer, distributor and retailer) for harm caused by defective or unsafe goods. That liability applies to mining companies notwithstanding the juristic person threshold in section 5(2)(b). But section 61 requires a supplier-consumer relationship, and where the OEM is a foreign entity with no South African presence and no direct transactional link to the mine, that chain may not extend far enough. This means enforcing strict liability against the party that designed and trained the AI system is a considerably more demanding exercise than enforcing a back-to-back warranty against a local supplier.